AI Foundations in Wappler

See how Wappler AI works in practice with a concrete cross-stack example, Ask vs Act choices, and the review loop that keeps results trustworthy.

Introduction: Wappler AI works at the application layer

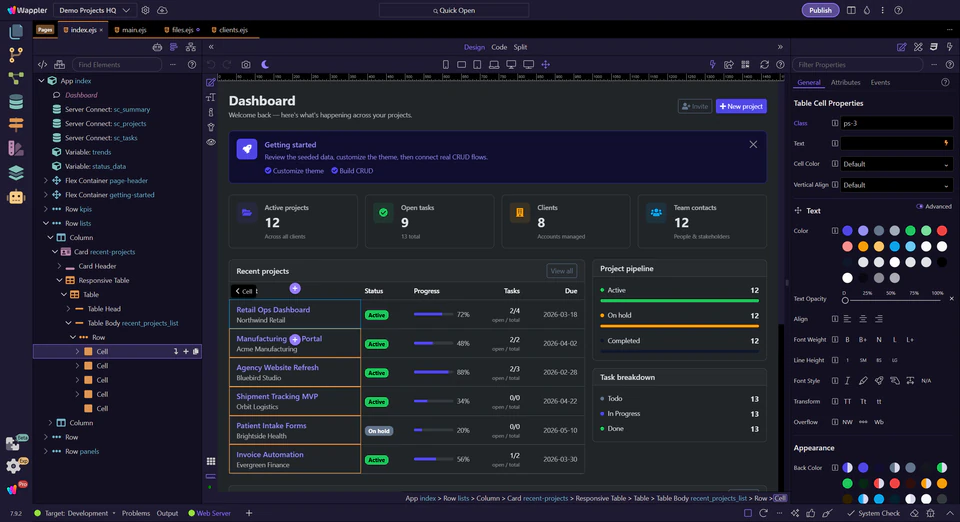

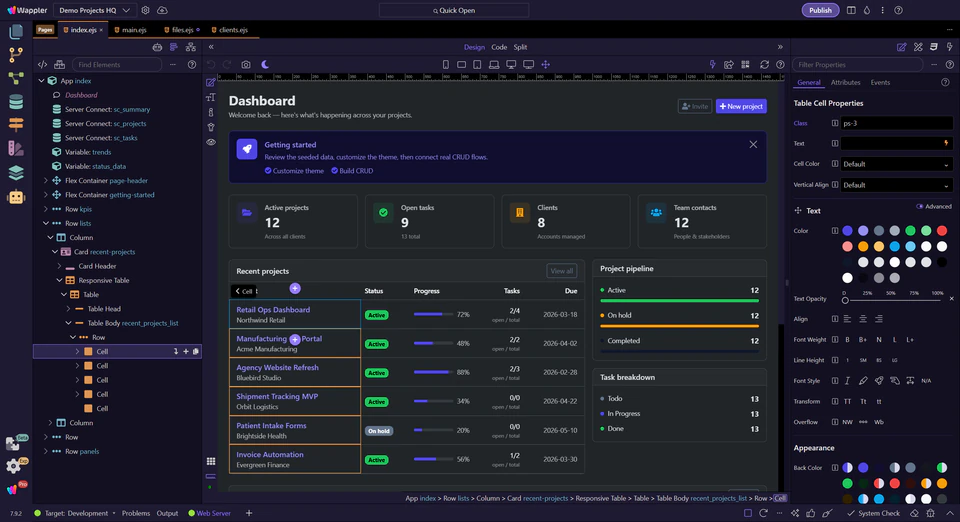

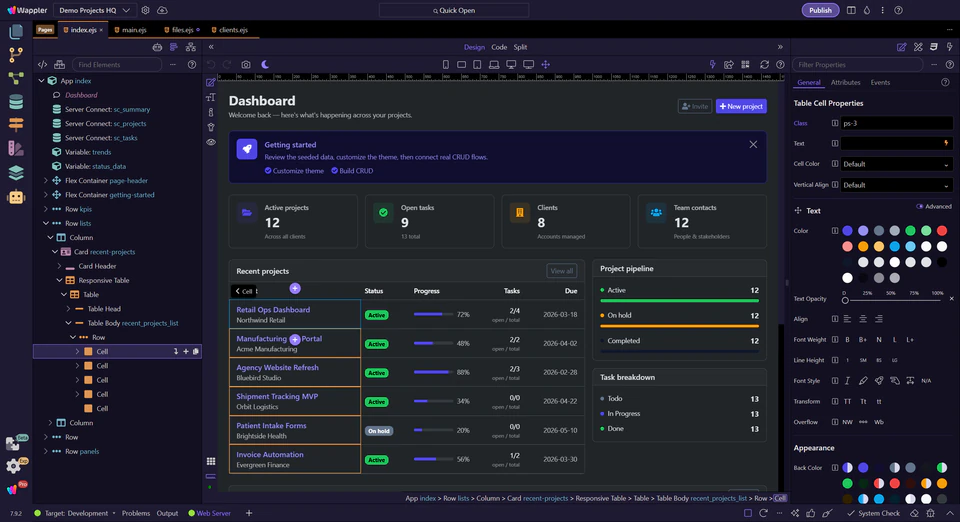

The practical difference in Wappler is that the AI can reason about a whole feature across the editors and surfaces the product already provides. Take one concrete task: a Bootstrap 5 feedback page with a form, a save action, and an admin list for reviewing submissions. In Wappler, the AI Manager can plan that as one connected application job instead of treating page markup, backend logic, and validation as unrelated code fragments.

"Build a Bootstrap 5 feedback flow with a public page, a styled form, App Connect submit and success states, a Server Connect save action, and an admin review view for submissions. Plan the page structure, bindings, validation response, and the checkpoints I should review after each pass."
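When reviewing the "validation response" part of a brief like this, it can help to write down the contract you expect before the AI Manager generates anything. The following is a minimal TypeScript sketch of such a contract, not Wappler's Server Connect API: the field names (`name`, `email`, `message`), the `ValidationResult` shape, and the length limits are all assumptions chosen for illustration.

```typescript
// Hypothetical shape of one feedback submission; the field names are assumptions.
interface FeedbackInput {
  name: string;
  email: string;
  message: string;
}

// Hypothetical validation response: one optional error message per field.
interface ValidationResult {
  ok: boolean;
  errors: Partial<Record<keyof FeedbackInput, string>>;
}

// Illustrative limits only; pick the real ones when you plan the feature.
const MIN_MESSAGE_LENGTH = 10;
const MAX_MESSAGE_LENGTH = 2000;

function validateFeedback(input: FeedbackInput): ValidationResult {
  const errors: ValidationResult["errors"] = {};

  if (input.name.trim().length === 0) {
    errors.name = "Name is required.";
  }

  // Deliberately loose check: something@something.tld with no whitespace.
  if (!/^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(input.email.trim())) {
    errors.email = "Enter a valid email address.";
  }

  const messageLength = input.message.trim().length;
  if (messageLength < MIN_MESSAGE_LENGTH) {
    errors.message = `Message must be at least ${MIN_MESSAGE_LENGTH} characters.`;
  } else if (messageLength > MAX_MESSAGE_LENGTH) {
    errors.message = `Message must be at most ${MAX_MESSAGE_LENGTH} characters.`;
  }

  return { ok: Object.keys(errors).length === 0, errors };
}
```

A written contract like this gives you something concrete to compare against at each checkpoint: the generated form fields, the save action's validation rules, and the error messages shown on the page should all agree with it.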

Use Ask to shape the work, then Act to build the next slice

Ask and Act are most useful when you use them on the same concrete task. For the feedback feature, start in Ask when you want the AI Manager to clarify the plan, identify the pages and handlers involved, or explain the safest sequence. Switch to Act when one slice is clear enough to generate or change directly.

Ask example: "Before changing anything, outline the page sections, App Connect bindings and form states, the Server Connect action, and the validation rules needed for this feedback flow. Then tell me the safest first slice."

Act example: "Now implement only the first slice: create the Bootstrap 5 page structure, add the feedback form fields, and wire the App Connect loading and success states. Leave the Server Connect save action for the next pass."
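To check a slice like this after Act has run, it can be useful to have a small mental model of the form states you expect to see. The sketch below models them as a state machine in TypeScript. It is an illustration only and does not use App Connect's API: the state names, the signal names, and the `nextState` function are hypothetical.

```typescript
// Hypothetical form states to look for when reviewing the generated page.
type FeedbackFormState =
  | { status: "idle" }
  | { status: "submitting" }
  | { status: "success" }
  | { status: "error"; message: string };

// Hypothetical signals that move the form between states.
type FormSignal =
  | { type: "submit" }
  | { type: "saved" }
  | { type: "failed"; message: string }
  | { type: "reset" };

function nextState(state: FeedbackFormState, signal: FormSignal): FeedbackFormState {
  switch (signal.type) {
    case "submit":
      // Ignore repeated submits while a request is already in flight.
      return state.status === "submitting" ? state : { status: "submitting" };
    case "saved":
      // A save result only matters if a submit is pending.
      return state.status === "submitting" ? { status: "success" } : state;
    case "failed":
      return state.status === "submitting"
        ? { status: "error", message: signal.message }
        : state;
    case "reset":
      return { status: "idle" };
  }
}
```

Each state maps to something you can verify in the page: a disabled button or spinner while submitting, a success message after saving, and a visible error when the save fails. If the generated slice has no visible behavior for one of these states, that is a good topic for the next prompt.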

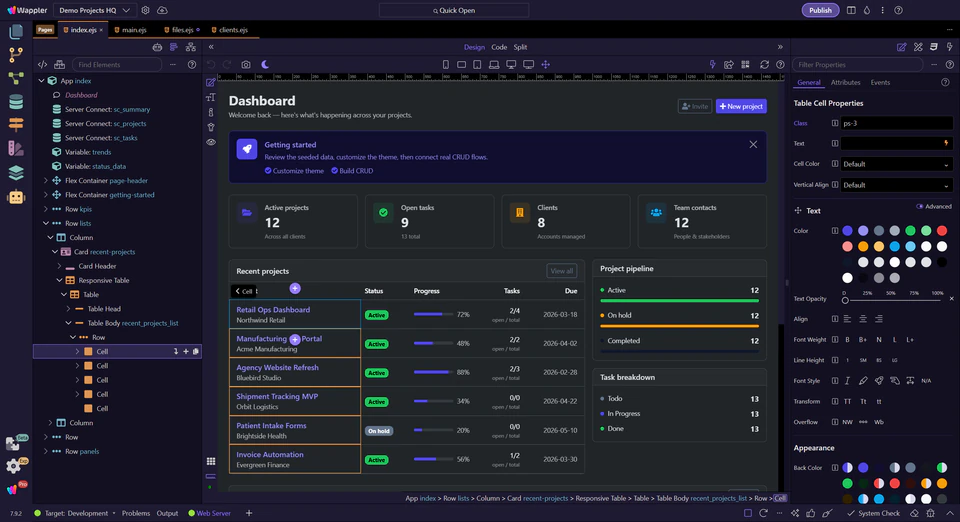

Use the AI Manager session tools to keep longer work understandable

Longer AI sessions stay usable when you keep the conversation anchored to the current slice. Prompt history lets you reuse a good brief, image attachments help when a layout is easier to show than to explain, the context meter tells you when the chat is getting too large, and the touched files and restore points show what actually changed and let you roll back if needed.

Good follow-up when a session gets large

"Summarize the accepted decisions so far for the feedback feature, list the files or surfaces already touched, and suggest whether I should continue this thread or start a new task for the admin review page."

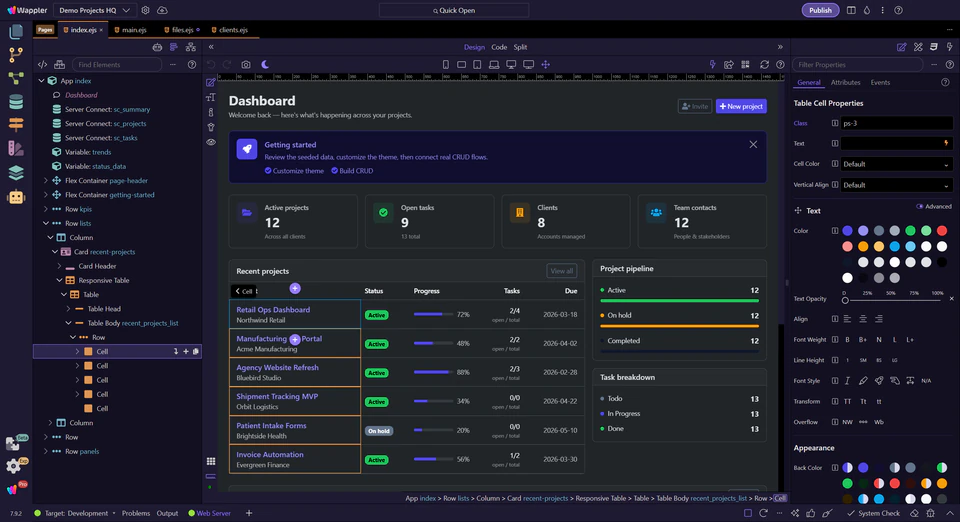

Stay in Wappler while the task is still about the app

The practical rule is simple: stay as high in the stack as you can while the job is still about the application. If you are shaping pages, forms, data contracts, Server Connect flows, or editor-visible structure, the AI Manager is the better control surface. Drop down to a code-first AI tool only when the task becomes genuinely low-level or falls outside Wappler’s model.
