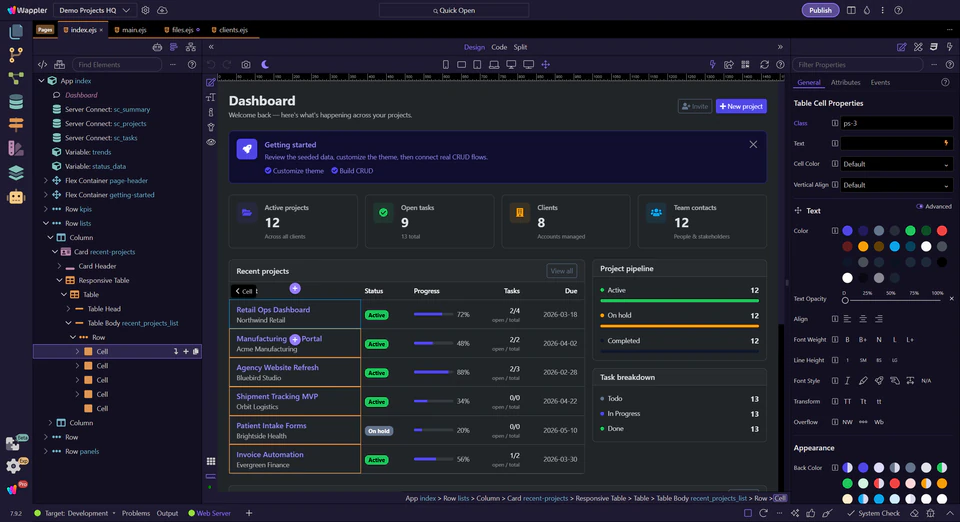

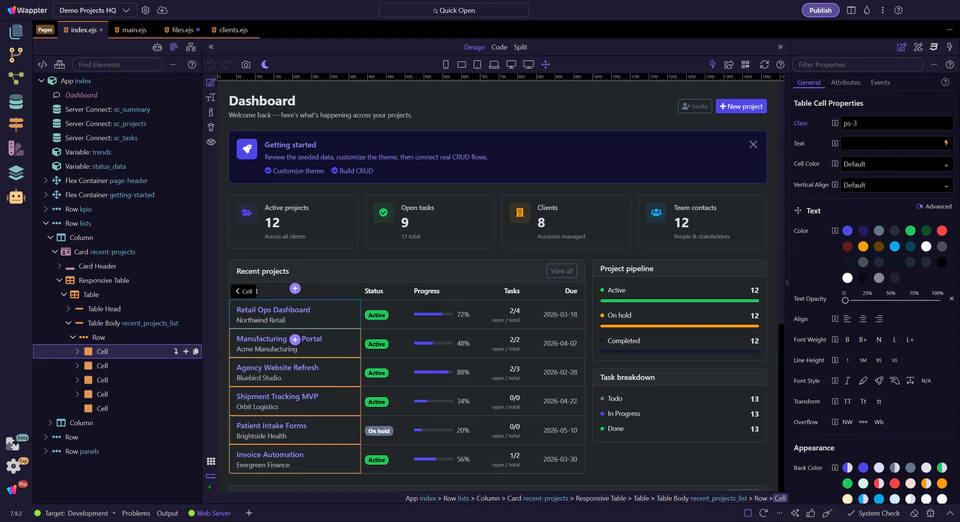

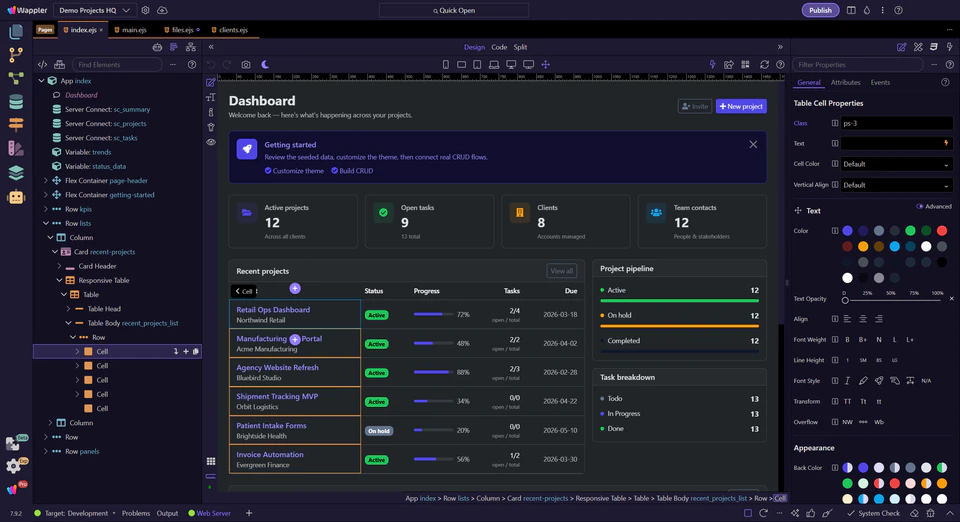

Agentic Generation, Context, and Verification

Advanced AI Manager workflows for larger tasks with bounded prompts, on-demand knowledge, context control, recovery, and verification.

Introduction: give the AI Manager a large but bounded application job

Advanced Wappler AI work is not about giving the model unlimited freedom. It is about giving the AI Manager a larger, still bounded job and keeping the review loop visible. A good example is extending the feedback feature into an admin moderation workflow: public submission, internal review dashboard, status changes, and verification after each slice.

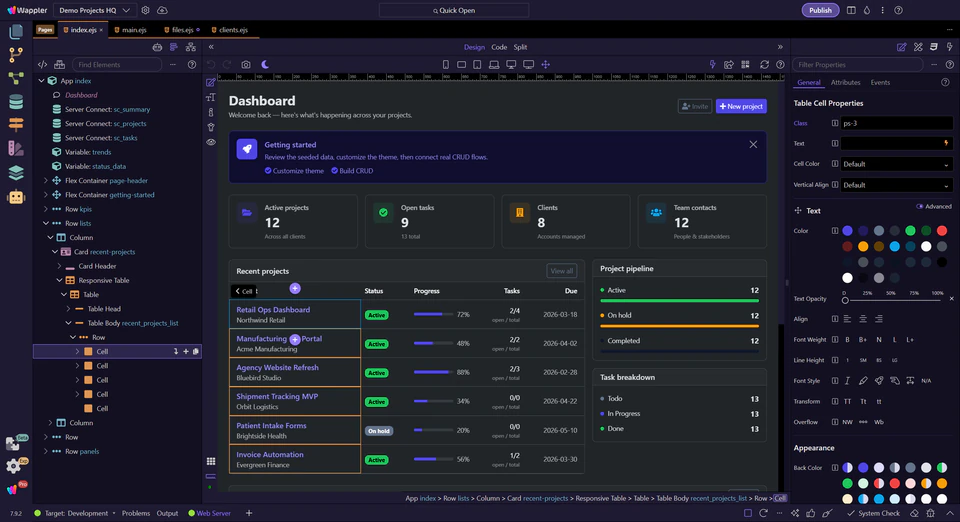

Advanced bounded prompt

"Extend the feedback feature into an internal moderation workflow. Add an admin review page with filters and status changes, keep the existing public form stable, and break the work into slices with validation checkpoints after each pass. Do not redesign unrelated pages."

On-demand knowledge keeps requests tight and token use efficient

Wappler AI now loads knowledge on demand instead of stuffing every possible rule into every session up front. The AI brings in the relevant App Connect, Flow Connect, Server Connect, project, framework, and instruction context only when the task actually needs it.
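The token saving behind on-demand loading can be illustrated with a small Python sketch. This is not how Wappler selects knowledge internally, since that mechanism is not described here. The `KnowledgePack` type, the keyword lists, and the token sizes are all hypothetical and exist only to show the general idea: load a pack when the request mentions something it covers, and compare the cost against loading everything up front.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgePack:
    """A hypothetical bundle of guidance with a rough token cost."""
    name: str
    keywords: tuple[str, ...]
    approx_tokens: int


# Assumed packs: the names echo areas mentioned in the text,
# the keywords and token sizes are invented for illustration.
PACKS = [
    KnowledgePack("App Connect", ("page", "form", "filter", "binding"), 1800),
    KnowledgePack("Server Connect", ("api", "database", "query", "status"), 2200),
    KnowledgePack("Flow Connect", ("flow", "workflow step"), 1500),
]


def select_packs(request: str, packs=PACKS) -> list[KnowledgePack]:
    """Return only the packs whose keywords appear in the request."""
    text = request.lower()
    return [p for p in packs if any(k in text for k in p.keywords)]


request = "Add an admin review page with filters and status changes"
chosen = select_packs(request)

upfront_tokens = sum(p.approx_tokens for p in PACKS)
on_demand_tokens = sum(p.approx_tokens for p in chosen)

print([p.name for p in chosen])
print(f"up front: {upfront_tokens} tokens, on demand: {on_demand_tokens} tokens")
```

Keyword matching is a deliberately naive stand-in for whatever relevance test a real assistant applies. The point is only that a narrowly worded request pulls in less context than a vague one.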

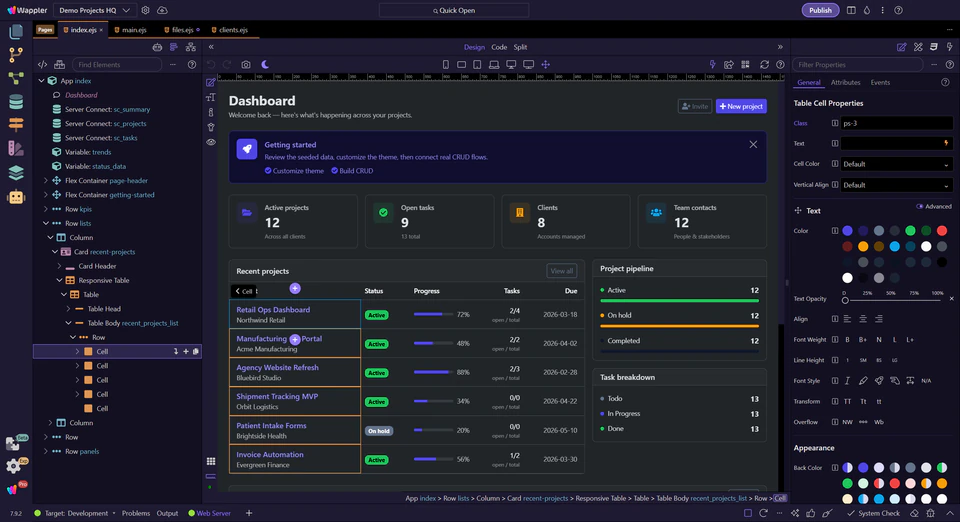

Large tasks work when you specify outcome, boundaries, and checks

A strong advanced prompt names the desired outcome, the slice of the app that may change, the parts that must stay stable, and the checks that will prove success. For the moderation example, say that the public feedback page must remain stable while the admin review workflow grows around it.

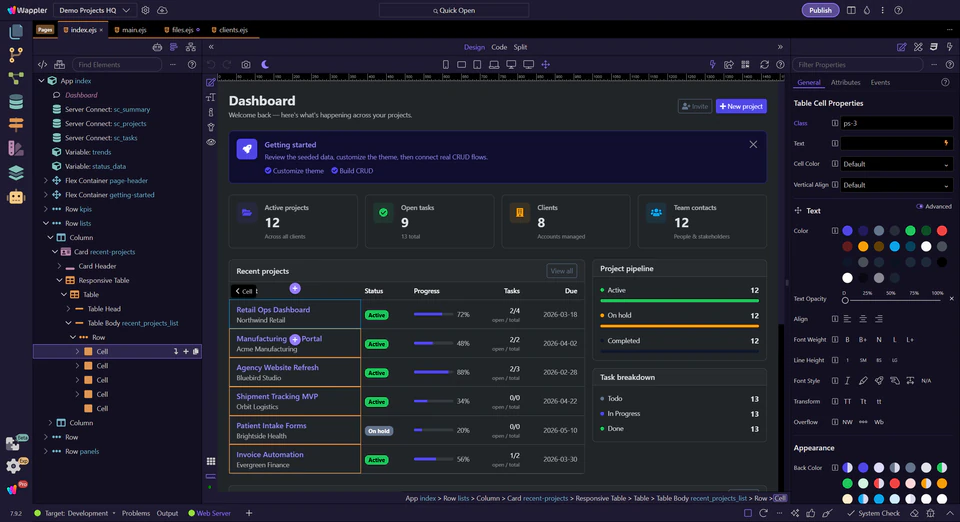

Outcome + boundary prompt

"Add moderation controls to the admin feedback area only. Keep the public feedback page and its submit flow unchanged. After each pass, tell me which files changed, what validation or review I should run, and what still remains for the next slice."
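If you write this kind of prompt often, it can help to keep the four parts (outcome, slice that may change, parts that must stay stable, and checks) as separate fields and assemble the text from them. The sketch below is a personal drafting aid in Python, not part of Wappler. `TaskBrief` and its field names are hypothetical, and the resulting string is simply pasted into the AI Manager like any other prompt.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical container for the four parts of a bounded prompt."""
    outcome: str
    may_change: list[str]
    must_stay_stable: list[str]
    checks: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Refuse to build a prompt that has no explicit boundary.
        if not self.may_change or not self.must_stay_stable:
            raise ValueError(
                "a bounded brief needs both a change scope and a stable scope"
            )
        lines = [
            self.outcome.rstrip(".") + ".",
            "Change only: " + ", ".join(self.may_change) + ".",
            "Keep unchanged: " + ", ".join(self.must_stay_stable) + ".",
        ]
        if self.checks:
            lines.append(
                "After each pass, report: " + "; ".join(self.checks) + "."
            )
        return " ".join(lines)


brief = TaskBrief(
    outcome="Add moderation controls to the admin feedback area",
    may_change=["the admin feedback area"],
    must_stay_stable=["the public feedback page", "its submit flow"],
    checks=[
        "which files changed",
        "what validation or review to run",
        "what remains for the next slice",
    ],
)
prompt = brief.to_prompt()
print(prompt)
```

Raising an error when either scope list is empty is a design choice: it makes an unbounded request fail before it reaches the model rather than after it has touched unrelated pages.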

Watch context growth, then reset intentionally

Use the context meter, summaries, prompt history, and New Task deliberately. Large agentic work accumulates decisions quickly, so treat context as a resource. Reuse the accepted brief when it still fits, but start a fresh task when the objective has shifted from the public feedback flow to, for example, a separate admin analytics view.

Context-reset prompt

"Summarize the accepted decisions for the moderation workflow so far, list the files already touched, and tell me whether the next request belongs in this task or should start as a new task because it changes scope."
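The decision between continuing, summarizing, and starting a new task can be written down as a simple rule. The Python sketch below is only a way to make that rule explicit. The token counts are numbers you would read off the context meter yourself, the function does not query Wappler, and the 70% threshold is an arbitrary assumption rather than a documented limit.

```python
def next_step(
    used_tokens: int,
    window_tokens: int,
    scope_changed: bool,
    summarize_at: float = 0.7,  # assumed threshold, tune to taste
) -> str:
    """Suggest 'continue', 'summarize', or 'new_task' for the next request."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    # A changed objective outweighs any amount of remaining room.
    if scope_changed:
        return "new_task"
    # Same objective, but the context is getting crowded.
    if used_tokens / window_tokens >= summarize_at:
        return "summarize"
    return "continue"


# Same moderation workflow, plenty of room left.
print(next_step(20_000, 100_000, scope_changed=False))
# Same workflow, but the context is filling up.
print(next_step(80_000, 100_000, scope_changed=False))
# Objective moved to a separate admin analytics view.
print(next_step(20_000, 100_000, scope_changed=True))
```

Scope change is checked first on purpose: a shifted objective calls for a fresh task even when the context meter still shows plenty of room.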

Use restore points and touched files as the safety rail

The practical safety advantage in Wappler is not just that the AI can change files. It is that you can see what changed, restore a previous state, and keep going from the last verified slice. For larger agentic work, treat touched-file review and restore points as part of the method, not as an emergency-only fallback.

Recovery-oriented prompt

"Use the last verified moderation checkpoint as the reference. If the current pass moved too far away, restore the better state and then fix only the status-change flow. List the touched files again after the correction so I can re-review them."
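Treating touched files and restore points as part of the method implies some bookkeeping: which pass was last verified, and which files have changed since. The Python sketch below models that bookkeeping as a plain log you could keep alongside the project. It does not call Wappler's restore feature, and `SliceLog`, `Pass`, and the file paths are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Pass:
    """One AI pass: a label, the files it touched, and whether you verified it."""
    label: str
    touched_files: list[str]
    verified: bool = False


class SliceLog:
    """Hypothetical manual log of passes for one task."""

    def __init__(self) -> None:
        self.passes: list[Pass] = []

    def record(self, label: str, touched_files: list[str]) -> Pass:
        entry = Pass(label, list(touched_files))
        self.passes.append(entry)
        return entry

    def last_verified(self) -> Optional[Pass]:
        """The most recent pass you reviewed and accepted, if any."""
        for entry in reversed(self.passes):
            if entry.verified:
                return entry
        return None

    def files_to_rereview(self) -> list[str]:
        """Files touched by any pass after the last verified one."""
        start = 0
        for i, entry in enumerate(self.passes):
            if entry.verified:
                start = i + 1
        pending: list[str] = []
        for entry in self.passes[start:]:
            for path in entry.touched_files:
                if path not in pending:
                    pending.append(path)
        return pending


log = SliceLog()
# File paths below are invented for illustration.
log.record("admin review page", ["admin/feedback.html"]).verified = True
log.record("status change flow", ["api/feedback/status.json", "admin/feedback.html"])
log.record("filter tweaks", ["admin/feedback.html"])

print(log.last_verified().label)
print(log.files_to_rereview())
```

After a restore, the unverified passes would be dropped from the log and the corrected pass recorded in their place, so the re-review list always reflects work done since the last accepted slice.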

Continue with the advanced Wappler AI loop

Use the next tours to compare positioning or move back into the practical builder routes.
